Some days ago I stumbled upon this tweet and couldn’t stop thinking about it:

I just published #ViveTrack, an #opensource @DynamoBIM package for real-time #HTCVive tracking. Available for download from the pkg manager.

https://t.co/SUrgawnPZv #DynamoBIM #HTC #Vive #GenerativeDesign #Autodesk #Interaction #Design pic.twitter.com/cBweVRK8Pu

Jose Luis Garcia del Castillo (@garciadelcast), July 30, 2018

In our VR Center in Munich we have a lot of interesting use cases, but if I had to pick one to show you, it’d be the implementation of real-time tracking on a 2D drawing, which you can see in this video. This use case is based on a professional ART system and Autodesk VRED.

But what if you want to implement it with simpler tools, like Revit and HTC Vive? I tested the ViveTrack package for this use case and the result is pretty amazing:

Admittedly, there is some latency and the Vive system is not as accurate as a professional tracking system, but it’s still a very nice example of the power of Dynamo!

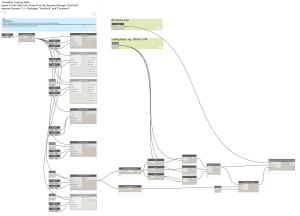

The most complex part was figuring out how to scale the output so that the real-world measurements align with the model, but once that was done, I would say it’s pretty straightforward.

You can download the basic script incl. the comments here. If you have any questions or thoughts, just drop me a comment below!
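
To give a rough idea of the scaling step mentioned above, here is a minimal Python sketch. It is not taken from the downloadable script or from ViveTrack. The function name `tracker_to_model`, the 1:50 drawing scale, the millimeter model units, and the assumption that tracker positions arrive in meters are all illustrative and need to be adapted to your own setup.

```python
def tracker_to_model(position_m, origin_m, drawing_scale, units_per_meter=1000.0):
    """Map a tracker position on a printed drawing to model coordinates.

    position_m      -- tracker position (x, y, z), assumed to be in meters
    origin_m        -- tracker position measured at the drawing's origin point
    drawing_scale   -- denominator of the drawing scale (50 for a 1:50 plan)
    units_per_meter -- model units per meter (1000 assumes a model in millimeters)
    """
    factor = drawing_scale * units_per_meter
    # Shift to the drawing's origin first, then blow the offset up to model size.
    return tuple((p - o) * factor for p, o in zip(position_m, origin_m))


# Hypothetical reading: the tracker sits 10 cm right and 20 cm up from the origin.
model_xyz = tracker_to_model(
    position_m=(1.10, 0.70, 0.0),
    origin_m=(1.00, 0.50, 0.0),
    drawing_scale=50,
)
print(model_xyz)
```

In a Dynamo graph the same arithmetic could live in a Python node or a few math nodes; the key point is to subtract the origin offset before applying the scale factor.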

Update August 8:

Jose, the developer behind the ViveTrack package, sent me a couple of notes that may improve performance and may be interesting for you out there as well:

- Rendering preview meshes is by far the most costly operation. Deactivate all mesh rendering and stick to just CS preview, it will run much better.

- Remove all nodes for devices that you are not using. Even if frozen, they consume a lot of memory. In your case, you only need the one Tracker node (the lighthouses and HMD are handled by the system, no need to track them yourself).

- Your script is very lightweight otherwise, so these tips should allow you to crank the Periodic speed to 100ms at least.

- Also, for the repositioning of the camera, you can set the system’s origin with the Calibrate node in ViveTrack.

I’ll run some more tests as soon as I get the chance and post an update on this project here! In the meantime, please check out Jose’s GitHub page for more references and information about this package and the related projects.

Hi Lejla,

This is absolutely amazing. I am doing my MSc project in architectural acoustics and I was looking for a way to send the HMD orientation information from Revit to PureData to create sound processing accordingly. And your experiment has shed light on what I was struggling with! Thanks.

Sahand